In this post you will find what a Hermitian matrix, also known as a self-adjoint matrix, is. You will find examples of Hermitian matrices, their main properties, and their formula. Finally, we also explain how to decompose any square complex matrix into the sum of a Hermitian matrix and a skew-Hermitian matrix.

What is a Hermitian (or self-adjoint) matrix?

The definition of Hermitian matrix is as follows:

A Hermitian matrix, also called a self-adjoint matrix, is a square matrix with complex entries that is equal to its own conjugate transpose. Thus, every Hermitian matrix satisfies the following condition:

$$A = A^H$$

where $A^H$ is the conjugate transpose of matrix $A$.

See: how to find the complex conjugate transpose of a matrix.
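The condition above can be checked numerically. The following is a minimal sketch in plain Python, assuming a matrix is stored as a list of rows; the helper names `conjugate_transpose` and `is_hermitian` and the matrices `A` and `B` are illustrative choices, not taken from the figures in this post.

```python
def conjugate_transpose(matrix):
    """Return the conjugate transpose of a matrix given as a list of rows."""
    rows, cols = len(matrix), len(matrix[0])
    # Entry (j, i) of the result is the conjugate of entry (i, j) of the input.
    return [[complex(matrix[i][j]).conjugate() for i in range(rows)]
            for j in range(cols)]


def is_hermitian(matrix, tol=1e-12):
    """Check A == A^H entry by entry, within a small tolerance."""
    n = len(matrix)
    if any(len(row) != n for row in matrix):
        return False  # only square matrices can be Hermitian
    adjoint = conjugate_transpose(matrix)
    return all(abs(matrix[i][j] - adjoint[i][j]) <= tol
               for i in range(n) for j in range(n))


# Illustrative matrices (assumed for this sketch)
A = [[2, 1 + 3j],
     [1 - 3j, 5]]
B = [[2, 1 + 3j],
     [1 + 3j, 5]]  # off-diagonal entries are equal instead of conjugate

print(is_hermitian(A))  # True
print(is_hermitian(B))  # False
```

The tolerance `tol` is there only because floating-point entries rarely compare exactly equal; for exact integer entries it plays no role.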

This type of matrix is named in honor of Charles Hermite, a 19th-century French mathematician who did important research in mathematics, particularly in the field of linear algebra.

These matrices bear his name because he showed that their eigenvalues are always real numbers, as we explain in detail below in the properties of Hermitian matrices.

Examples of Hermitian matrices

Once we have seen the meaning of Hermitian matrix (or self-adjoint matrix), let’s see some examples of Hermitian matrices of different dimensions:

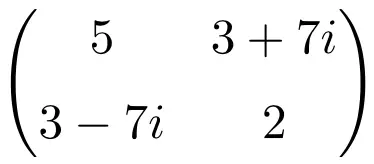

Example of a 2×2 dimension Hermitian matrix

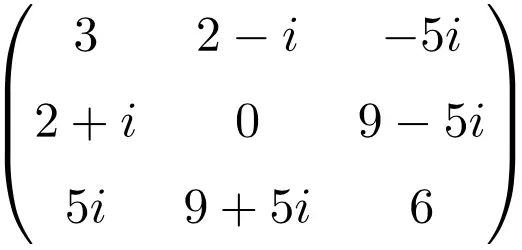

Example of a 3×3 dimension Hermitian matrix

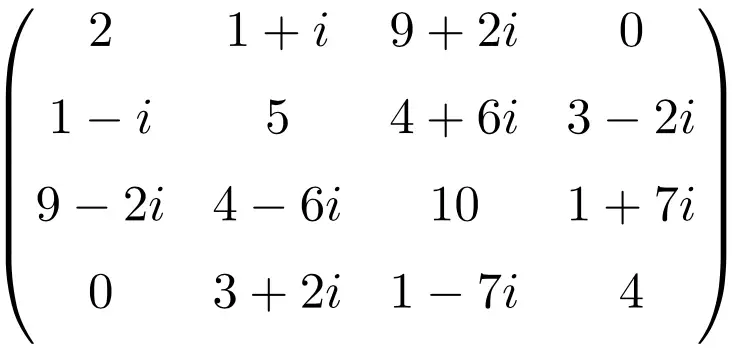

Example of a 4×4 dimension Hermitian matrix

All of these matrices are Hermitian because the conjugate transpose of each matrix is equal to each matrix itself.

Hermitian matrix properties

Hermitian matrices have the following characteristics:

- Every Hermitian matrix is a normal matrix, although not every normal matrix is Hermitian.

- Any Hermitian matrix is diagonalizable by a unitary matrix. Also, the obtained diagonal matrix only contains real elements.

- Therefore, the eigenvalues of a Hermitian matrix are always real numbers. This property was discovered by Charles Hermite, and these matrices were named Hermitian in his honor.

- A Hermitian matrix has orthogonal eigenvectors for distinct eigenvalues. Moreover, a Hermitian matrix of order $n$ always has an orthonormal basis of $\mathbb{C}^n$ made up of eigenvectors of the matrix.

- Every real symmetric matrix is also Hermitian. For example, the 2×2 identity matrix $I = \begin{pmatrix} 1 & 0 \\ 0 & 1 \end{pmatrix}$.

- A Hermitian matrix can be expressed as the sum of a real symmetric matrix plus an imaginary skew-symmetric matrix.

- The sum (or difference) of two Hermitian matrices is another Hermitian matrix, since:

$$(A \pm B)^H = A^H \pm B^H = A \pm B$$

- The product of a Hermitian matrix and a scalar is another Hermitian matrix if the scalar is a real number.

- The product of two Hermitian matrices is generally not Hermitian. However, the product is Hermitian when the two matrices commute, that is, when the result of the multiplication is the same regardless of the order in which they are multiplied, since then the following condition is satisfied:

$$(AB)^H = B^H A^H = BA = AB$$

- If a Hermitian matrix is a nonsingular matrix, the inverse of this matrix also turns out to be a Hermitian matrix.

- The determinant of a Hermitian matrix is always a real number. Here is the proof of this property:

$$\det\left(A^H\right) = \det\left(\overline{A}^T\right) = \overline{\det(A)}$$

Therefore, if $A = A^H$:

$$\det(A) = \overline{\det(A)}$$

A complex number is equal to its own conjugate only when it is real, so the determinant of a Hermitian matrix must be a real number.
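Several of the properties above can be checked numerically on small examples. The sketch below uses plain Python and 2×2 matrices only; the matrices `A`, `B` and `C` and all helper names are assumptions made for illustration, and the eigenvalue helper relies on the characteristic polynomial of a 2×2 matrix, so it does not generalize to larger orders.

```python
import cmath


def conjugate_transpose(m):
    return [[complex(m[i][j]).conjugate() for i in range(len(m))]
            for j in range(len(m[0]))]


def is_hermitian(m, tol=1e-9):
    # Assumes m is square.
    h = conjugate_transpose(m)
    n = len(m)
    return all(abs(m[i][j] - h[i][j]) <= tol
               for i in range(n) for j in range(n))


def add(a, b):
    return [[x + y for x, y in zip(ra, rb)] for ra, rb in zip(a, b)]


def matmul(a, b):
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]


def det2(m):
    """Determinant of a 2x2 matrix."""
    return m[0][0] * m[1][1] - m[0][1] * m[1][0]


def eigenvalues_2x2(m):
    """Roots of the characteristic polynomial x^2 - tr(M) x + det(M)."""
    trace = m[0][0] + m[1][1]
    root = cmath.sqrt(trace * trace - 4 * det2(m))
    return (trace + root) / 2, (trace - root) / 2


# Illustrative Hermitian matrices (assumed for this sketch)
A = [[2, 1 + 1j], [1 - 1j, 3]]
B = [[1, 2j], [-2j, 4]]
C = [[4, 1 + 1j], [1 - 1j, 5]]  # C = A + 2I, so it commutes with A

print(is_hermitian(add(A, B)))             # True: sums stay Hermitian
print(is_hermitian(matmul(A, B)))          # False: A and B do not commute
print(matmul(A, C) == matmul(C, A))        # True: A and C commute
print(is_hermitian(matmul(A, C)))          # True: commuting product is Hermitian
print(abs(det2(A).imag) < 1e-12)           # True: the determinant is real
print([ev.real for ev in eigenvalues_2x2(A)])                  # [4.0, 1.0]
print(all(abs(ev.imag) < 1e-12 for ev in eigenvalues_2x2(A)))  # True
```

For matrices of higher order, a numerical library with a dedicated Hermitian eigenvalue routine would be the more practical choice; this sketch only aims to make the statements concrete.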

Formula for a Hermitian matrix

Hermitian matrices have a very easy-to-remember formula: the entries on the main diagonal are real numbers, and the entry located in the i-th row and j-th column must be the complex conjugate of the entry in the j-th row and i-th column.

Here are several examples of the application of the Hermitian matrix formula:

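$$\begin{pmatrix} a & b + ci \\ b - ci & d \end{pmatrix}$$

Hermitian matrix of order 2

$$\begin{pmatrix} a & b + ci & d + ei \\ b - ci & f & g + hi \\ d - ei & g - hi & k \end{pmatrix}$$

Hermitian matrix of order 3

$$\begin{pmatrix} a & b + ci & d + ei & f + gi \\ b - ci & h & j + ki & l + mi \\ d - ei & j - ki & n & p + qi \\ f - gi & l - mi & p - qi & r \end{pmatrix}$$

Hermitian matrix of order 4

In all three matrices, every letter other than the imaginary unit $i$ denotes a real number.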
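The formula also gives a simple recipe for building a Hermitian matrix: choose the real diagonal and the entries above it, and the entries below the diagonal are then forced. Here is a minimal sketch in plain Python; the function name `hermitian_from_upper` and the input layout (a list for the diagonal and a dictionary keyed by `(i, j)` with `i < j`) are assumptions made for this example.

```python
def hermitian_from_upper(diagonal, upper):
    """Build a Hermitian matrix from its real diagonal and the entries
    strictly above the diagonal, given as {(i, j): value} with i < j."""
    n = len(diagonal)
    matrix = [[0j] * n for _ in range(n)]
    for i, value in enumerate(diagonal):
        # float() raises TypeError for a complex value, which enforces
        # that diagonal entries are real.
        matrix[i][i] = complex(float(value))
    for (i, j), value in upper.items():
        matrix[i][j] = complex(value)
        matrix[j][i] = complex(value).conjugate()  # mirrored entry
    return matrix


# Illustrative input (assumed for this sketch)
H = hermitian_from_upper(
    [1, 4, -2],
    {(0, 1): 2 + 1j, (0, 2): -3j, (1, 2): 5},
)

for row in H:
    print(row)

print(H[1][0] == 2 - 1j)  # True
print(H[2][0] == 3j)      # True
```

Entries above the diagonal that are not listed in `upper` are left at zero, which keeps the result Hermitian.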

Decomposition of a complex matrix into a Hermitian and a skew-Hermitian matrix

Any square matrix with complex elements can be decomposed into the sum of a Hermitian matrix and a skew-Hermitian matrix. To see why, we need the following properties of these types of matrices:

- The sum of a square complex matrix plus its conjugate transpose results in a Hermitian matrix.

- The difference between a square complex matrix and its conjugate transpose results in a skew-Hermitian matrix.

- Therefore, every square complex matrix can be decomposed into the sum of a Hermitian and a skew-Hermitian matrix. This result is known as the Toeplitz decomposition:

$$C = A + B, \qquad A = \frac{1}{2}\left(C + C^H\right), \qquad B = \frac{1}{2}\left(C - C^H\right)$$

where $C$ is the complex matrix that we want to decompose, $C^H$ is its conjugate transpose, and $A$ and $B$ are the Hermitian and the skew-Hermitian matrices, respectively, into which matrix $C$ is decomposed.
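The decomposition can be computed directly by taking half the sum and half the difference of the matrix and its conjugate transpose. The following is a minimal sketch in plain Python; the function name `toeplitz_decomposition` and the example matrix `C` are assumptions made for illustration.

```python
def conjugate_transpose(m):
    return [[complex(m[i][j]).conjugate() for i in range(len(m))]
            for j in range(len(m[0]))]


def toeplitz_decomposition(c):
    """Split a square complex matrix C into a Hermitian part A and a
    skew-Hermitian part B such that C = A + B."""
    n = len(c)
    ch = conjugate_transpose(c)
    a = [[(c[i][j] + ch[i][j]) / 2 for j in range(n)] for i in range(n)]
    b = [[(c[i][j] - ch[i][j]) / 2 for j in range(n)] for i in range(n)]
    return a, b


# Illustrative matrix (assumed for this sketch)
C = [[1 + 2j, 3 - 1j],
     [4j, 5]]

A, B = toeplitz_decomposition(C)
A_h = conjugate_transpose(A)
B_h = conjugate_transpose(B)

# A is Hermitian: A^H == A
print(all(A_h[i][j] == A[i][j] for i in range(2) for j in range(2)))       # True
# B is skew-Hermitian: B^H == -B
print(all(B_h[i][j] == -B[i][j] for i in range(2) for j in range(2)))      # True
# The two parts add back up to C
print(all(A[i][j] + B[i][j] == C[i][j] for i in range(2) for j in range(2)))  # True
```

The exact equality checks work here because every entry is a multiple of one half; with arbitrary floating-point entries, a comparison with a small tolerance would be safer.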